Safe playgrounds break more arms

When playgrounds install softer surfaces, kids break MORE arms. Kids see rubber and think they're safe. So they climb higher and jump from places they wouldn't have before.

But broken arms aren't broken necks. Good playground design isn't about eliminating injury; it's about padding the things that cause broken necks while leaving enough real risk that kids stay and learn to climb.

If we pad the floor, risk doesn't disappear.

It relocates.

The same thing happens with AI governance.

Lock down the models, stand up an approval committee, and funnel every decision through a Center of Excellence. The official playground gets so boring that people climb the fence and start running the real work on personal accounts where nobody's watching. That is what exposes you to broken-neck risks.

So, how should we respond?

Pad everything?

In March 2023, Samsung gave its teams access to ChatGPT. Within the first 20 days, there were three separate leaks. One engineer pasted semiconductor source code into the chatbot to debug it. Another fed in confidential meeting transcripts. A third uploaded chip defect test sequences. Samsung's response was a blanket ban: ChatGPT, Bard, and Bing were all blocked. Go around the blockade and you might get fired. After 20 months of lockdown, Samsung's labor union was writing to the executive chairman asking for ChatGPT Enterprise back: "We should not waste our eight hours every day."

Only pad the neck breakers?

JPMorgan banned ChatGPT a few months earlier for similar reasons. But they didn't stop there. By July 2024, they'd built LLM Suite, an internal portal that routes OpenAI and Anthropic models through their own infrastructure. Today, 250,000 employees have access, and half use it daily. CIO Lori Beer framed the question as: "What's the right level to create an agent; how do you give them identity and access?" In HR, a human gets broader data access than an agent, which operates inside specific bounds. They padded the neck breakers and left the arm breakers alone.

Most governance failures aren't about allowing too much risk.

They're about padding the wrong things.

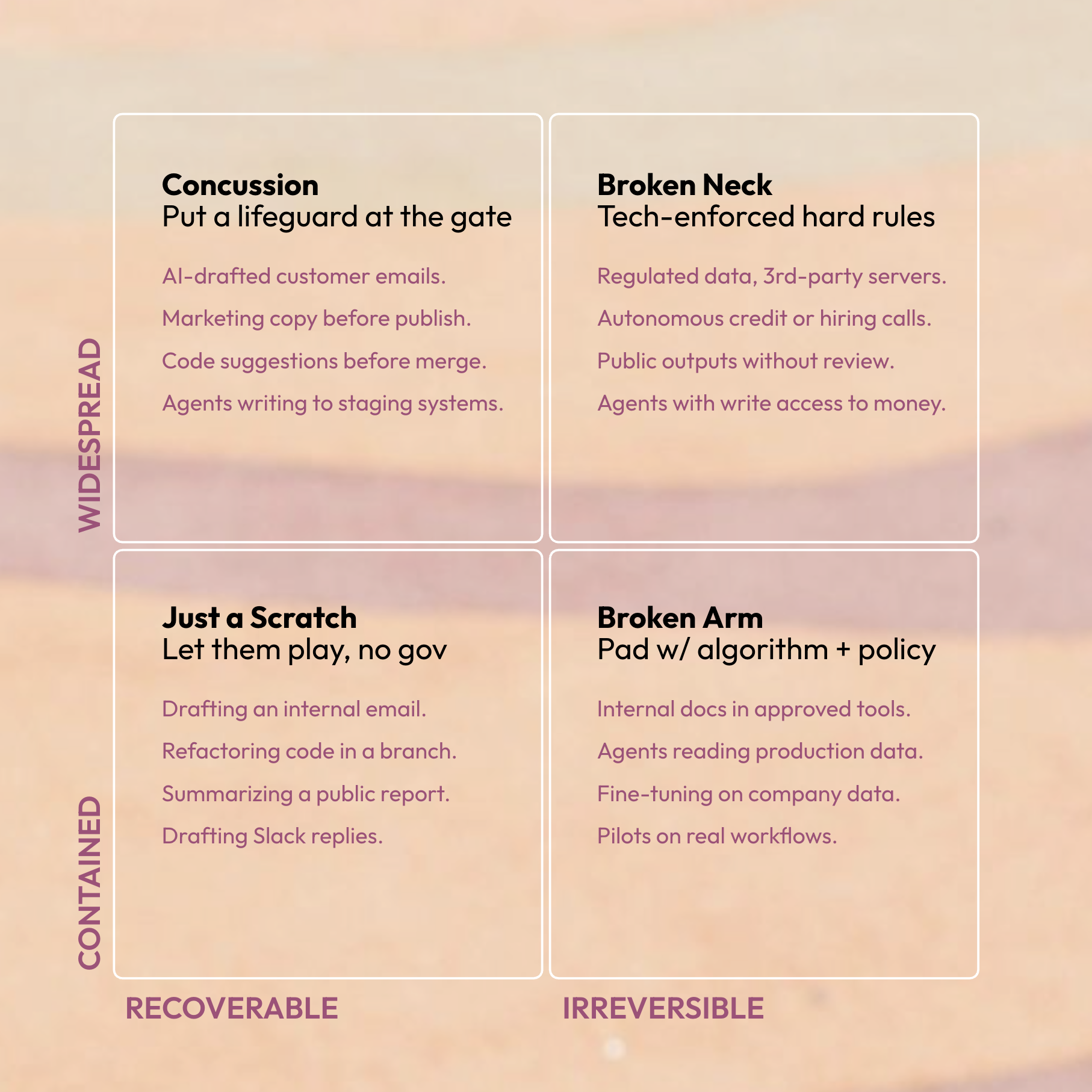

Pad the neck breakers: the catastrophic and irreversible risks. That means regulated data leaving the building, autonomous decisions in life-affecting domains, or outputs that reach customers without review.

Let the arm breakers flourish: the small or recoverable risks. That means a prompt experiment, a team building an internal agent on approved rails, or a draft that gets human review before it ships.

If a company treats every fall like it's the last one, nobody will learn to climb.